In this section, we discuss convergence properties in detail. First the SI is reviewed in the context of the Lanczos algorithm. Next a convergence theory of two-sided algorithms for nonsymmetric eigenvalue problems is outlined with reference to other sections of this work. Finally techniques are reviewed to cope with the unavailability of normalized residuals.

The Ritz values tend to converge first to eigenvalues at the boundary of the

convex hull of the spectrum. To compute interior eigenvalues it is often

worthwhile to apply a spectral transformation to ![]() . The strategy is to

accelerate the convergence rate by mapping the desired eigenvalues in the

complex plane to the exterior of the transformed spectrum. The standard

approach is to apply the Lanczos algorithm to the shifted and inverted matrix

. The strategy is to

accelerate the convergence rate by mapping the desired eigenvalues in the

complex plane to the exterior of the transformed spectrum. The standard

approach is to apply the Lanczos algorithm to the shifted and inverted matrix

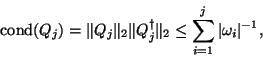

An inherent difficulty of a NHEP is that the known bounds on the

distance from

![]() to an eigenvalue of

to an eigenvalue of ![]() are not practical to

compute [425,256]. Another complication is that, to be of use, a

convergence theory for two-sided algorithms must account for the fact that the left

and right Krylov subspaces simultaneously approximate eigenvalues of

are not practical to

compute [425,256]. Another complication is that, to be of use, a

convergence theory for two-sided algorithms must account for the fact that the left

and right Krylov subspaces simultaneously approximate eigenvalues of ![]() . These two

problems are resolved by bounding the distance,

. These two

problems are resolved by bounding the distance, ![]() , to the nearest matrix,

, to the nearest matrix, ![]() ,

with eigentriplet

,

with eigentriplet

![]() . Eigenvalue sensitivities

(condition numbers) are also available.

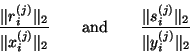

Recall that residual vectors

. Eigenvalue sensitivities

(condition numbers) are also available.

Recall that residual vectors ![]() and

and

![]() as defined in (7.40) and (7.41).

Next observe that

the biorthogonality condition (7.37) implies that

as defined in (7.40) and (7.41).

Next observe that

the biorthogonality condition (7.37) implies that

![]() .

Together these relations imply that

.

Together these relations imply that

There is a subtle yet critical complication concerning testing the Ritz

vectors for convergence. The approximate eigenvectors of the original

matrix ![]() are only calculated after the test in step (16) suggests the

convergence of Ritz values

are only calculated after the test in step (16) suggests the

convergence of Ritz values

![]() to the desired eigenvalues

of

to the desired eigenvalues

of ![]() . If the Lanczos vectors are stored, then the bases

. If the Lanczos vectors are stored, then the bases ![]() and

and ![]() are used to get the approximate eigenvectors. If the Lanczos vectors are

not stored, then there are two possibilities [93]: one either

reruns the three-term recurrences to generate the requested eigenvectors,

or applies inverse iteration (see §7.4).

The complication is that the decision whether or not to accept a Ritz vector

as an approximate eigenvector cannot be based on these unnormalized residuals.

After

are used to get the approximate eigenvectors. If the Lanczos vectors are

not stored, then there are two possibilities [93]: one either

reruns the three-term recurrences to generate the requested eigenvectors,

or applies inverse iteration (see §7.4).

The complication is that the decision whether or not to accept a Ritz vector

as an approximate eigenvector cannot be based on these unnormalized residuals.

After

![]() and

and

![]() are computed, it is necessary to compare the normalized residuals

are computed, it is necessary to compare the normalized residuals

The second common cause of shrinkage is best explained in terms of

implementations of the Lanczos algorithm in which ![]() and

and ![]() are

scaled to have unit Euclidean

length [179,354,105]. The inner products

are

scaled to have unit Euclidean

length [179,354,105]. The inner products

It is common for

![]() to decrease nearly

monotonically and by many orders of magnitude over hundreds of Lanczos

steps [179,105].

And in this case the unnormalized residuals are poor estimates for

Ritz values that do not appear until after

to decrease nearly

monotonically and by many orders of magnitude over hundreds of Lanczos

steps [179,105].

And in this case the unnormalized residuals are poor estimates for

Ritz values that do not appear until after ![]() has decreased

by several orders of magnitude. More precisely, for eigenvectors

has decreased

by several orders of magnitude. More precisely, for eigenvectors ![]() with the special property that

with the special property that

![]() is negligible, the lower bound

is negligible, the lower bound