Version 3.12.1

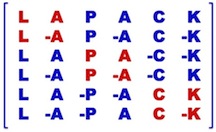

LAPACK is a software package provided by Univ. of Tennessee; Univ. of California, Berkeley; Univ. of Colorado Denver; and NAG Ltd.

Presentation

LAPACK is written in Fortran 90 and provides routines for solving systems of simultaneous linear equations, least-squares solutions of linear systems of equations, eigenvalue problems, and singular value problems. The associated matrix factorizations (LU, Cholesky, QR, SVD, Schur, generalized Schur) are also provided, as are related computations such as reordering of the Schur factorizations and estimating condition numbers. Dense and banded matrices are handled, but not general sparse matrices. In all areas, similar functionality is provided for real and complex matrices, in both single and double precision.
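As a rough illustration of the first problem class listed above, the following sketch solves a small dense system A x = b by LU factorization with partial pivoting, the factorization behind LAPACK's general linear solvers. It is an unblocked teaching version in plain C, not LAPACK code: the function name `lu_solve` and its return convention are assumptions made for this example. Matrices are stored column by column, as in Fortran.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper (not a LAPACK routine).
 * Solves A*x = b in place by LU factorization with partial pivoting.
 *   a : n-by-n matrix, column-major; overwritten by the L and U factors
 *   b : right-hand side of length n; overwritten by the solution x
 * Returns 0 on success, or k+1 if the pivot in column k is exactly zero. */
int lu_solve(int n, double *a, double *b)
{
    assert(n > 0 && a != NULL && b != NULL);

    for (int k = 0; k < n; ++k) {
        /* Pick the row with the largest magnitude entry in column k. */
        int p = k;
        double maxval = a[k + k * n] < 0 ? -a[k + k * n] : a[k + k * n];
        for (int i = k + 1; i < n; ++i) {
            double v = a[i + k * n] < 0 ? -a[i + k * n] : a[i + k * n];
            if (v > maxval) { maxval = v; p = i; }
        }
        if (maxval == 0.0)
            return k + 1;               /* matrix is singular */

        /* Swap rows k and p of A and of b. */
        if (p != k) {
            for (int j = 0; j < n; ++j) {
                double t = a[k + j * n];
                a[k + j * n] = a[p + j * n];
                a[p + j * n] = t;
            }
            double t = b[k]; b[k] = b[p]; b[p] = t;
        }

        /* Eliminate the entries below the pivot, updating b as we go. */
        for (int i = k + 1; i < n; ++i) {
            double m = a[i + k * n] / a[k + k * n];
            a[i + k * n] = m;           /* store the multiplier (entry of L) */
            for (int j = k + 1; j < n; ++j)
                a[i + j * n] -= m * a[k + j * n];
            b[i] -= m * b[k];
        }
    }

    /* Back substitution with the upper triangular factor U. */
    for (int i = n - 1; i >= 0; --i) {
        double s = b[i];
        for (int j = i + 1; j < n; ++j)
            s -= a[i + j * n] * b[j];
        b[i] = s / a[i + i * n];
    }
    return 0;
}
```

For the system 2x + y = 3, x + 3y = 5, calling `lu_solve(2, a, b)` with `a = {2, 1, 1, 3}` and `b = {3, 5}` leaves x = 0.8 and y = 1.4 in `b`, up to rounding. A production code would call the library's own driver instead, which uses blocked algorithms and the BLAS.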

The original goal of the LAPACK project was to make the widely used EISPACK and LINPACK libraries run efficiently on shared-memory vector and parallel processors. On these machines, LINPACK and EISPACK are inefficient because their memory access patterns disregard the multi-layered memory hierarchies of the machines, thereby spending too much time moving data instead of doing useful floating-point operations. LAPACK addresses this problem by reorganizing the algorithms to use block matrix operations, such as matrix multiplication, in the innermost loops. These block operations can be optimized for each architecture to account for the memory hierarchy, and so provide a transportable way to achieve high efficiency on diverse modern machines. We use the term "transportable" instead of "portable" because, for fastest possible performance, LAPACK requires that highly optimized block matrix operations be already implemented on each machine.
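The blocking idea described above can be sketched with matrix multiplication itself. The example below is a minimal illustration, not LAPACK or BLAS code: the routine name `matmul_blocked` and the tile-size parameter `nb` are assumptions for this sketch, and tuned BLAS implementations go much further (data packing, vectorization, architecture-specific kernels). The loops walk over `nb`-by-`nb` tiles so that each tile is reused while it is likely still in fast memory.

```c
#include <assert.h>
#include <stddef.h>

static int min_int(int x, int y) { return x < y ? x : y; }

/* Hypothetical example routine (not part of LAPACK or the BLAS).
 * Computes C += A * B for n-by-n column-major matrices, one
 * nb-by-nb tile at a time. The caller must initialize C. */
void matmul_blocked(int n, int nb, const double *a, const double *b, double *c)
{
    assert(n >= 0 && nb > 0);

    for (int jj = 0; jj < n; jj += nb) {
        for (int kk = 0; kk < n; kk += nb) {
            for (int ii = 0; ii < n; ii += nb) {
                /* Tile bounds, clipped at the matrix edge. */
                int jend = min_int(jj + nb, n);
                int kend = min_int(kk + nb, n);
                int iend = min_int(ii + nb, n);

                /* Multiply one tile of A by one tile of B. */
                for (int j = jj; j < jend; ++j) {
                    for (int k = kk; k < kend; ++k) {
                        double bkj = b[k + (size_t)j * n];
                        for (int i = ii; i < iend; ++i)
                            c[i + (size_t)j * n] += a[i + (size_t)k * n] * bkj;
                    }
                }
            }
        }
    }
}
```

The result is the same as for the plain triple loop; only the order of memory accesses changes. The best value of `nb` depends on the cache sizes of the machine, which is why the text above leaves the block operations to per-architecture implementations.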

LAPACK routines are written so that as much as possible of the computation is performed by calls to the Basic Linear Algebra Subprograms (BLAS). LAPACK is designed at the outset to exploit the Level 3 BLAS — a set of specifications for Fortran subprograms that do various types of matrix multiplication and the solution of triangular systems with multiple right-hand sides. Because of the coarse granularity of the Level 3 BLAS operations, their use promotes high efficiency on many high-performance computers, particularly if specially coded implementations are provided by the manufacturer.
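To make the second kind of Level 3 BLAS operation concrete, here is an unoptimized reference sketch of a triangular solve with multiple right-hand sides, written in plain C. In the BLAS this operation is provided by xTRSM (DTRSM in double precision), which also handles upper triangular, transposed, and unit-diagonal cases; the simplified routine below, with the hypothetical name `solve_lower_multi`, covers only the lower triangular, non-transposed case and is meant to show what is computed, not how a tuned library computes it.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical reference routine (not the BLAS interface).
 * Solves L * X = B, where L is n-by-n lower triangular with a nonzero
 * diagonal and B is n-by-nrhs. Both are column-major. B is overwritten
 * by the solution X, one right-hand-side column at a time. */
void solve_lower_multi(int n, int nrhs, const double *l, double *b)
{
    assert(n >= 0 && nrhs >= 0);

    for (int j = 0; j < nrhs; ++j) {
        double *col = b + (size_t)j * n;    /* j-th right-hand side */
        for (int k = 0; k < n; ++k) {
            /* Forward substitution: x_k is now fully determined. */
            col[k] /= l[k + (size_t)k * n];
            double xk = col[k];
            /* Remove x_k's contribution from the remaining equations. */
            for (int i = k + 1; i < n; ++i)
                col[i] -= xk * l[i + (size_t)k * n];
        }
    }
}
```

Because all right-hand sides share the same triangular matrix, an optimized implementation can process them together as a matrix-matrix operation, which is the source of the coarse granularity mentioned above.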

Highly efficient machine-specific implementations of the BLAS are available for many modern high-performance computers. For details of known vendor- or ISV-provided BLAS, consult the BLAS FAQ. Alternatively, the user can download ATLAS to automatically generate an optimized BLAS library for the architecture. A Fortran 77 reference implementation of the BLAS is available from netlib; however, its use is discouraged as it will not perform as well as a specifically tuned implementation.

This material is based upon work supported by the National Science Foundation and the Department of Energy (DOE). Any opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation (NSF) or the Department of Energy (DOE).

Software

Licensing

LAPACK is a freely-available software package. It is available from netlib via anonymous ftp and the World Wide Web at http://www.netlib.org/lapack . Thus, it can be included in commercial software packages (and has been). We only ask that proper credit be given to the authors.

The license used for the software is the modified BSD license.

Like all software, it is copyrighted. It is not trademarked, but we do ask the following:

- If you modify the source for these routines we ask that you change the name of the routine and comment the changes made to the original.
- We will gladly answer any questions regarding the software. If a modification is done, however, it is the responsibility of the person who modified the routine to provide support.

LAPACK, version 3.12.1

- Download: lapack-3.12.1.tar.gz
- Updated: January 8, 2025
- LAPACK GitHub open bugs (current known bugs)

Standard C language APIs for LAPACK

A collaboration between the LAPACK team and the Intel Math Kernel Library team.

- The LAPACK C interface is now included in the LAPACK package (in the lapacke directory).
- Updated: November 16, 2013
- Header files: lapacke.h, lapacke_config.h, lapacke_mangling.h, lapacke_utils.h

LAPACK for Windows

LAPACK is built under Windows using CMake, the cross-platform, open-source build system. The new build system was developed in collaboration with Kitware Inc.

A dedicated website (http://icl.utk.edu/lapack-for-windows/lapack) is available for Windows users.

- You will find information about your configuration needs.
- You will be able to download pre-built BLAS, LAPACK, and LAPACKE libraries.
- You will learn how to run LAPACKE directly from Visual Studio (just C code, no Fortran). LAPACK now offers Windows users the ability to code in C using Microsoft Visual Studio and link to LAPACK Fortran libraries without the need for a vendor-supplied Fortran compiler add-on. For more information, please refer to LAWN 270.
- You will get step-by-step procedures: Easy Windows Build.

GIT Access

The LAPACK Git repository (http://github.com/Reference-LAPACK/lapack) is open to our users with read-only access.

Support

Do not forget to check our GitHub repository for current and fixed issues and for improvements.

Contributors

LAPACK is a community-wide effort. LAPACK relies on many contributors, and we would like to acknowledge their outstanding work. Here is the list of LAPACK contributors since 1992.

If you wish to contribute, please have a look at the LAPACK Program Style. This document has been written to facilitate contributions to LAPACK by documenting its design and implementation guidelines.

LAPACK Project Software Grant and Corporate Contributor License Agreement (“Agreement”) [Download]

Contributions are always welcome and can be sent to the LAPACK team.

Documentation

Release Notes

The LAPACK Release Notes contain the history of the modifications made to the LAPACK library between each new version.

Improvements and Bugs

LAPACK is an active project, and we strive to bring new improvements and new algorithms on a regular basis. Here is the list of improvements since LAPACK 3.0.

Please contribute to our wishlist if you feel some functionality or algorithms are missing by emailing the LAPACK team.

Here is the list of the bugs (corrected, confirmed and to be confirmed) since LAPACK 3.6.1

Here is the list of the bugs (corrected, confirmed and to be confirmed) between LAPACK 3.0 and LAPACK 3.6.1

FAQ

Consult LAPACK Frequently Asked Questions.

Please contribute to our FAQ if you feel some questions are missing by emailing the LAPACK team.

The LAPACK discussion forum on GitHub is also a good source to find answers.

Browse and download LAPACK routines with the online documentation browser

Here you will be able to browse through the many LAPACK functions, and also download an individual routine plus its dependencies.

To access a routine, either use the search functionality or go through the different modules.

Users' Guide

Manpages

Please follow the instructions of the README to install the LAPACK manpages on your machine.

The LAPACK team would like to thank Sylvestre Ledru, for helping us maintain these manpages, and Albert from the Doxygen team.

LAWNS: LAPACK Working Notes

Release History

Version 1.0: February 29, 1992
- Revised, Version 1.0a: June 30, 1992
- Revised, Version 1.0b: October 31, 1992
- Revised, Version 1.1: March 31, 1993

Version 2.0: September 30, 1994

Version 3.0: June 30, 1999
- Update, Version 3.0: October 31, 1999
- Update, Version 3.0: May 31, 2000

Version 3.1.0: November 12, 2006
- Version 3.1.1: February 26, 2007

Version 3.2: November 18, 2008
- Version 3.2.1: April 17, 2009
- Version 3.2.2: June 30, 2010

Version 3.3.0: November 14, 2010
- Version 3.3.1: April 18, 2011

Version 3.4.0: November 11, 2011
- Version 3.4.1: April 20, 2012
- Version 3.4.2: September 25, 2012

Version 3.5.0: November 19, 2013

Version 3.6.0: November 15, 2015
- Version 3.6.1: June 18, 2016

Version 3.7.0: December 24, 2016
- Version 3.7.1: June 25, 2017

Version 3.8.0: November 17, 2017

Version 3.9.0: November 21, 2019
- Version 3.9.1: April 1, 2021

Version 3.10.0: June 28, 2021
- Version 3.10.1: April 12, 2022

Version 3.11.0: November 11, 2022

Version 3.12.0: November 24, 2023
- Version 3.12.1: January 8, 2025

Previous Release

LAPACK, version 3.12.1
- Download: lapack-3.12.1.tar.gz
- Updated: Jan 8, 2025

LAPACK, version 3.12.0
- Download: lapack-3.12.0.tar.gz
- Updated: Nov 24, 2023

LAPACK, version 3.11.0
- Download: lapack-3.11.0.tar.gz
- Updated: Nov 11, 2022

LAPACK, version 3.10.1
- Download: lapack-3.10.01.tar.gz
- Updated: Apr 12, 2022

LAPACK, version 3.10.0
- Download: lapack-3.10.0.tar.gz
- Updated: Jun 28, 2021

LAPACK, version 3.9.1
- Download: lapack-3.9.1.tar.gz
- Updated: Apr 1, 2021

LAPACK, version 3.9.0
- Download: lapack-3.9.0.tar.gz
- Updated: Nov 21, 2019

LAPACK, version 3.8.0
- Download: lapack-3.8.0.tar.gz
- Updated: Nov 12, 2017

LAPACK, version 3.7.1
- Download: lapack-3.7.1.tgz
- Updated: June 25, 2017

LAPACK, version 3.7.0
- Download: lapack-3.7.0.tgz
- Updated: December 24, 2016

LAPACK, version 3.6.1
- Download: lapack-3.6.1.tgz
- Updated: June 18, 2016

LAPACK, version 3.6.0
- Download: lapack-3.6.0.tgz
- Updated: November 15, 2015

LAPACK, version 3.5.0
- Download: lapack-3.5.0.tgz
- Updated: November 19, 2013

LAPACK, version 3.4.2
- Download: lapack-3.4.2.tgz
- Updated: September 25, 2012

LAPACK, version 3.4.1
- Download: lapack-3.4.1.tgz
- Updated: April 20, 2012

LAPACK, version 3.4.0
- Download: lapack-3.4.0.tgz
- Updated: November 11, 2011

LAPACK, version 3.3.1
- Download: lapack-3.3.1.tgz
- Updated: April 18, 2011

LAPACK version 3.3.0
- Download: lapack-3.3.0.tgz
- Updated: November 14, 2010

LAPACK version 3.2.2
- Download: lapack-3.2.2.tgz
- Updated: June 30, 2010

LAPACK version 3.2.1
- Download: lapack-3.2.1.tgz
- Updated: April 17, 2009

LAPACK version 3.2 with CMAKE package
- Download: lapack-3.2.1-CMAKE.tgz
- Download: lapack-3.2.1-CMAKE.zip
- Updated: January 26, 2010

LAPACK version 3.2
- Download: lapack-3.2.tgz
- Updated: November 18, 2008

LAPACK version 3.1.1 with manpages and html
- Download: lapack-3.1.1.tgz
- Updated: February 26, 2007

LAPACK version 3.1.1
- Download: lapack-lite-3.1.1.tgz
- Updated: February 26, 2007

LAPACK version 3.1
- Download: lapack-lite-3.1.0.tgz
- Updated: November 12, 2006

LAPACK version 3.0 + UPDATES
- Download: lapack-3.0.tgz
- Updated: May 31, 2000

LAPACK UPDATES for version 3.0
- Download: update.tgz
- Instructions: cd LAPACK; gunzip -c update.tgz | tar xvf -
- Updated: May 31, 2000

Vendor LAPACK libraries

Please refer to our FAQ for the list of current vendor implementations.

Related Projects

PLASMA

The Parallel Linear Algebra for Scalable Multi-core Architectures (PLASMA) project aims to address the critical and highly disruptive situation that is facing the Linear Algebra and High Performance Computing community due to the introduction of multi-core architectures.

PLASMA’s ultimate goal is to create software frameworks that enable programmers to simplify the process of developing applications that can achieve both high performance and portability across a range of new architectures.

The development of programming models that enforce asynchronous, out of order scheduling of operations is the concept used as the basis for the definition of a scalable yet highly efficient software framework for Computational Linear Algebra applications.

MAGMA

The MAGMA (Matrix Algebra on GPU and Multicore Architectures) project aims to develop a dense linear algebra library similar to LAPACK but for heterogeneous/hybrid architectures, starting with current "Multicore+GPU" systems.

The MAGMA research is based on the idea that, to address the complex challenges of the emerging hybrid environments, optimal software solutions will themselves have to hybridize, combining the strengths of different algorithms within a single framework. Building on this idea, we aim to design linear algebra algorithms and frameworks for hybrid manycore and GPU systems that can enable applications to fully exploit the power that each of the hybrid components offers.

Related older Projects

LAPACK 95 by Jerzy Waśniewski

lapack++ by Roldan Pozo: LAPACK extensions for high performance linear algebra computations. This version includes support for solving linear systems using LU, Cholesky, and QR matrix factorizations.

Subdirectory containing CCI (Call Conversion Interface) for LAPACK/ESSL. See LAWN 82 for more information.